Linear Algebra for Data Science

Linear algebra is the branch of mathematics that deals with vectors, vector spaces, and linear transformations. In data science, linear algebra offers essential tools for manipulating data in many ways, understanding relationships between variables, performing dimensionality reduction, and solving systems of equations. Linear algebra techniques, including matrix operations and eigenvalue decomposition, are routinely used in tasks such as regression, clustering, and machine learning algorithms.

Importance of Linear Algebra in Data Science

Linear algebra is important in data science because it underpins many of the field's core techniques.

It forms the backbone of many machine learning algorithms, enabling operations such as matrix multiplication that are essential to model training and prediction.
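
As a minimal sketch of the prediction step, assuming NumPy (the article does not name a library) and made-up weights, a linear model scores every observation with a single matrix-vector product:

```python
import numpy as np

# Hypothetical toy data: 4 observations (rows) x 3 features (columns)
X = np.array([
    [1.0, 2.0, 0.5],
    [0.0, 1.0, 1.5],
    [2.0, 0.0, 1.0],
    [1.0, 1.0, 1.0],
])

# Hypothetical weights and bias, standing in for an already-trained linear model
w = np.array([0.5, -1.0, 2.0])
b = 0.25

# One matrix-vector product scores all observations at once
predictions = X @ w + b  # holds -0.25, 2.25, 3.25, 1.75

# The same computation written as an explicit loop over rows, for comparison
loop_predictions = np.array(
    [sum(x_i * w_i for x_i, w_i in zip(row, w)) + b for row in X]
)
```

Training chooses `w` and `b`; once they are fixed, prediction for a whole batch is just this one product.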

Linear algebra techniques enable dimensionality reduction, which makes data easier to process and interpret.

Eigenvalues and eigenvectors help describe the variability in a dataset, which informs clustering and pattern recognition.

Solving systems of equations is crucial for optimization tasks and parameter estimation.

Furthermore, linear algebra underpins image and signal processing techniques that are critical in data analysis.

Proficiency in linear algebra enables data scientists to represent, manipulate, and extract insights from data effectively, ultimately supporting accurate models and informed decision-making.

Representation of Problems in Linear Algebra

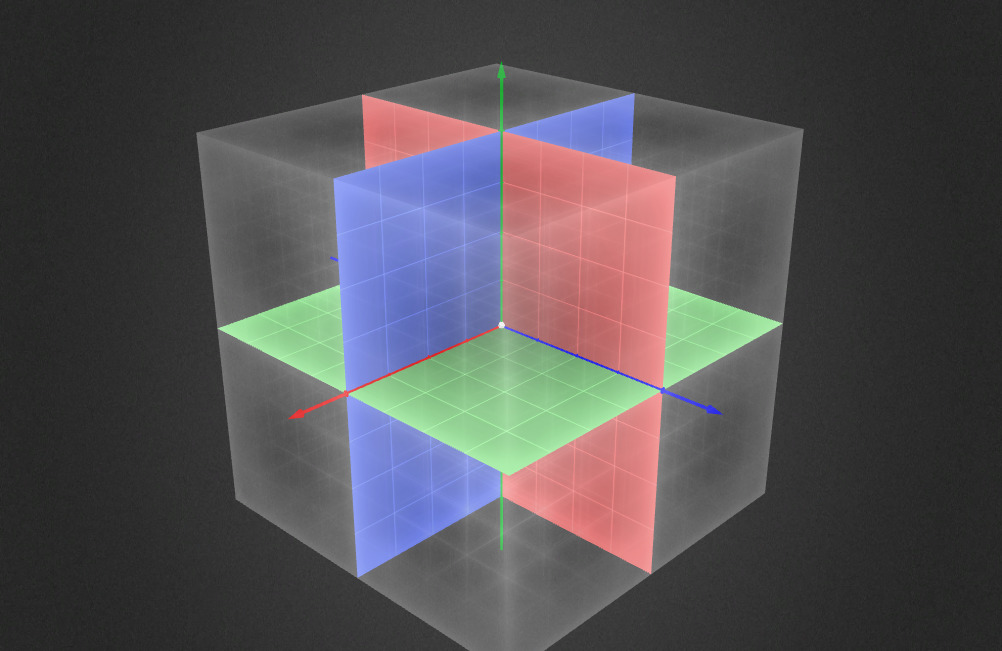

Many problems can be represented and solved using matrices and vectors.

Many real-world situations can be expressed as systems of linear equations and written in matrix form.
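
For instance, a small, made-up pricing problem can be written as a system A·p = y and solved with `np.linalg.solve`. This is a sketch assuming NumPy; the quantities and prices are hypothetical:

```python
import numpy as np

# Hypothetical problem: 3 units of product A and 2 of product B cost 12,
# while 1 unit of A and 4 units of B cost 14. What is each unit price?
#   3a + 2b = 12
#   1a + 4b = 14
A = np.array([[3.0, 2.0],
              [1.0, 4.0]])    # coefficient matrix, one row per equation
y = np.array([12.0, 14.0])    # right-hand side

prices = np.linalg.solve(A, y)  # solves A @ prices = y; approximately a = 2, b = 3
```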

Additionally, problems involving transformations such as scaling, rotation, and projection can be expressed using matrices.
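
A minimal sketch, assuming NumPy, of rotation, scaling, and projection as 2×2 matrices acting on points stored as columns; the angle, scale factors, and the line y = x are arbitrary choices for illustration:

```python
import numpy as np

theta = np.pi / 2  # rotate by 90 degrees counter-clockwise
R = np.array([[np.cos(theta), -np.sin(theta)],
              [np.sin(theta),  np.cos(theta)]])
S = np.diag([2.0, 0.5])  # scale x by 2 and y by 0.5

# Hypothetical points stored as columns: (1, 0), (0, 1), (1, 1)
P = np.array([[1.0, 0.0, 1.0],
              [0.0, 1.0, 1.0]])

rotated = R @ P
scaled = S @ P
# Transformations compose by multiplication; the rightmost matrix acts first,
# so this rotates the points and then scales them
rotate_then_scale = S @ R @ P

# Orthogonal projection onto the line y = x
u = np.array([1.0, 1.0]) / np.sqrt(2.0)  # unit vector along the line
proj = np.outer(u, u)                    # projection matrix u u^T
projected = proj @ P
```

Applying a projection twice changes nothing (`proj @ proj` equals `proj`), which is a quick way to check that a matrix really is a projection.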

Datasets can be represented as matrices, in which each row corresponds to an observation and each column corresponds to a feature.
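
As a sketch of this layout, assuming NumPy and a small hypothetical housing dataset, column-wise statistics and standardization each become a single array operation:

```python
import numpy as np

# Hypothetical dataset: each row is an observation (a house),
# each column a feature: [area_m2, bedrooms, age_years]
data = np.array([
    [120.0, 3.0, 10.0],
    [ 85.0, 2.0, 25.0],
    [150.0, 4.0,  5.0],
    [ 95.0, 2.0, 40.0],
])

n_obs, n_features = data.shape      # 4 observations, 3 features
feature_means = data.mean(axis=0)   # one mean per column (feature)
feature_stds = data.std(axis=0)     # one standard deviation per column

# Standardize every column at once: subtract its mean, divide by its std
standardized = (data - feature_means) / feature_stds
```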

Eigenvalues and eigenvectors reveal dominant patterns and directions of variation within data, which helps with tasks like dimensionality reduction and understanding variability.

Linear regression problems can be solved with matrix operations to find the optimal coefficients.
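
A minimal sketch, assuming NumPy and noise-free synthetic data generated from y = 1 + 2x, showing two matrix routes to the least-squares coefficients: the normal equations (XᵀX)β = Xᵀy and the `np.linalg.lstsq` solver:

```python
import numpy as np

# Hypothetical noise-free data generated from y = 1 + 2x
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 1.0 + 2.0 * x

# Design matrix: a column of ones (intercept) next to the feature column
X = np.column_stack([np.ones_like(x), x])

# Normal equations: (X^T X) beta = X^T y
beta_normal = np.linalg.solve(X.T @ X, X.T @ y)

# Least-squares solver working on X directly
beta_lstsq, residuals, rank, singular_values = np.linalg.lstsq(X, y, rcond=None)
```

Both recover an intercept of 1 and a slope of 2 here. Forming XᵀX squares the condition number of the problem, so the solver that works on X directly is usually the safer choice on real, ill-conditioned data.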

Classification problems can also be tackled with linear algebra methods such as support vector machines, which can involve mapping data into higher-dimensional spaces.

How is Linear Algebra used in Data Science?

Linear algebra is used in data science for many tasks and techniques:

Data Representation: Datasets are often represented as matrices, in which each row corresponds to an observation and each column represents a feature. This matrix representation allows efficient data manipulation and analysis.

Matrix Operations: Basic matrix operations such as addition, multiplication, and transposition are used in many calculations, including computing similarity measures, transforming data, and solving equations.
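
As one example of a similarity measure, this sketch (assuming NumPy and hypothetical feature vectors) computes all pairwise cosine similarities with a single multiplication by a transpose:

```python
import numpy as np

# Hypothetical feature vectors for three items, one per row
items = np.array([
    [1.0, 0.0, 1.0],
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 0.0],
])

# Scale each row to unit length
norms = np.linalg.norm(items, axis=1, keepdims=True)
unit = items / norms

# Entry (i, j) is the cosine similarity between item i and item j
similarity = unit @ unit.T
```

Items 0 and 1 point in the same direction (similarity 1), while item 2 is orthogonal to both (similarity 0).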

Dimensionality Reduction: Singular Value Decomposition (SVD) and Principal Component Analysis (PCA) methods rely on principles from linear algebra to decrease the complexity of data while retaining critical information.
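
A minimal PCA sketch via SVD, assuming NumPy and synthetic data in which three features are driven mostly by one hidden factor; the helper name `pca` is hypothetical, not a library function:

```python
import numpy as np

def pca(X, k):
    """Hypothetical helper: project X (n_samples x n_features) onto its top-k principal components."""
    X_centered = X - X.mean(axis=0)
    # Thin SVD: X_centered = U @ diag(S) @ Vt; rows of Vt are the principal directions
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:k]                  # shape (k, n_features)
    scores = X_centered @ components.T   # shape (n_samples, k)
    explained_variance = S**2 / (X.shape[0] - 1)
    ratio = explained_variance / explained_variance.sum()
    return scores, components, ratio[:k]

rng = np.random.default_rng(0)
t = rng.normal(size=200)  # hidden factor
# Synthetic data: feature 2 is roughly 2x feature 1, feature 3 is small noise
X = np.column_stack([
    t,
    2.0 * t + 0.1 * rng.normal(size=200),
    0.1 * rng.normal(size=200),
])

scores, components, ratio = pca(X, k=1)
```

Here one component keeps almost all of the variance, so the three-column data can be summarized by a single column of `scores`.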

Linear Regression: Linear algebra is the foundation of linear regression, a widely used technique for modeling relationships between variables and making predictions.

Machine Learning Algorithms: Algorithms such as support vector machines, linear discriminant analysis, and logistic regression use linear algebra operations to build models and classify data.

Image and Signal Processing: Linear algebra techniques are vital in image processing tasks such as filtering, compression, and edge detection. Fourier transforms and convolutions are themselves linear operations.
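
To make the last point concrete, this sketch (assuming NumPy, a hypothetical 1-D signal, and hypothetical helper `convolution_matrix`) writes a blur filter, an edge filter, and the discrete Fourier transform as explicit matrices and checks them against `np.convolve` and `np.fft.fft`. Image filters apply the same idea in two dimensions:

```python
import numpy as np

def convolution_matrix(kernel, n):
    """Hypothetical helper: return M such that M @ signal equals np.convolve(signal, kernel) ("full" mode)."""
    k = len(kernel)
    M = np.zeros((n + k - 1, n))
    for j in range(n):
        M[j:j + k, j] = kernel  # column j holds the kernel shifted down by j rows
    return M

# Hypothetical 1-D signal with a step up and a step down
signal = np.array([0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0])
smooth = np.array([1.0, 1.0, 1.0]) / 3.0  # moving-average (blur) filter
edge = np.array([1.0, -1.0])              # difference (edge) filter

blurred = convolution_matrix(smooth, len(signal)) @ signal
edges = convolution_matrix(edge, len(signal)) @ signal  # nonzero only at the two steps

# The DFT is also a matrix: F[k, m] = exp(-2*pi*i*k*m / n)
n = len(signal)
idx = np.arange(n)
F = np.exp(-2j * np.pi * np.outer(idx, idx) / n)
spectrum = F @ signal
```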

Optimization: Linear algebra is important for optimization algorithms used in machine learning, such as gradient descent, which relies on computing gradients.
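
A minimal gradient descent sketch for least-squares regression, assuming NumPy and noise-free synthetic data; the learning rate and iteration count are hand-picked for this data, not general defaults. The gradient of the mean squared error is itself a matrix expression, (2/n)·Xᵀ(Xw − y):

```python
import numpy as np

# Hypothetical noise-free data from y = 1 + 2x, with an intercept column
x = np.linspace(0.0, 1.0, 20)
X = np.column_stack([np.ones_like(x), x])
y = 1.0 + 2.0 * x

w = np.zeros(2)        # initial parameters [intercept, slope]
learning_rate = 0.5    # assumed step size, chosen by hand for this data
n = len(y)

for _ in range(2000):
    residual = X @ w - y               # prediction error for every sample
    grad = (2.0 / n) * X.T @ residual  # gradient of the mean squared error
    w -= learning_rate * grad          # step against the gradient
```

On this data the loop converges to the same coefficients a direct least-squares solve gives, roughly an intercept of 1 and a slope of 2.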

Eigenvalues and Eigenvectors: These concepts help identify dominant patterns and directions of variability in data, which is useful for clustering, feature extraction, and understanding data characteristics.
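
A short sketch, assuming NumPy and synthetic 2-D data spread mostly along the x-axis, of finding the dominant direction of variability from the eigenvectors of the covariance matrix:

```python
import numpy as np

rng = np.random.default_rng(42)
# Synthetic data: standard deviation 3 along x, 0.5 along y
data = rng.normal(size=(500, 2)) * np.array([3.0, 0.5])

cov = np.cov(data, rowvar=False)  # 2 x 2 covariance matrix of the features
# eigh is meant for symmetric matrices and returns eigenvalues in ascending order
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]  # sort largest first
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]  # columns are eigenvectors

dominant_direction = eigenvectors[:, 0]              # close to (1, 0) or (-1, 0)
share_of_variance = eigenvalues[0] / eigenvalues.sum()
```

The largest eigenvalue measures the variance along the dominant direction, so its share of the total tells how much of the data's spread that one direction explains.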

Data Visualization: Dimensionality reduction techniques based on linear algebra, such as PCA, help visualize high-dimensional data in low-dimensional spaces.

Solving Equations: Linear algebra techniques are a common approach to solving systems of linear equations, which arise in optimization problems and parameter estimation.