AI-Driven Phishing

What Is AI-Driven Phishing in Cybersecurity?

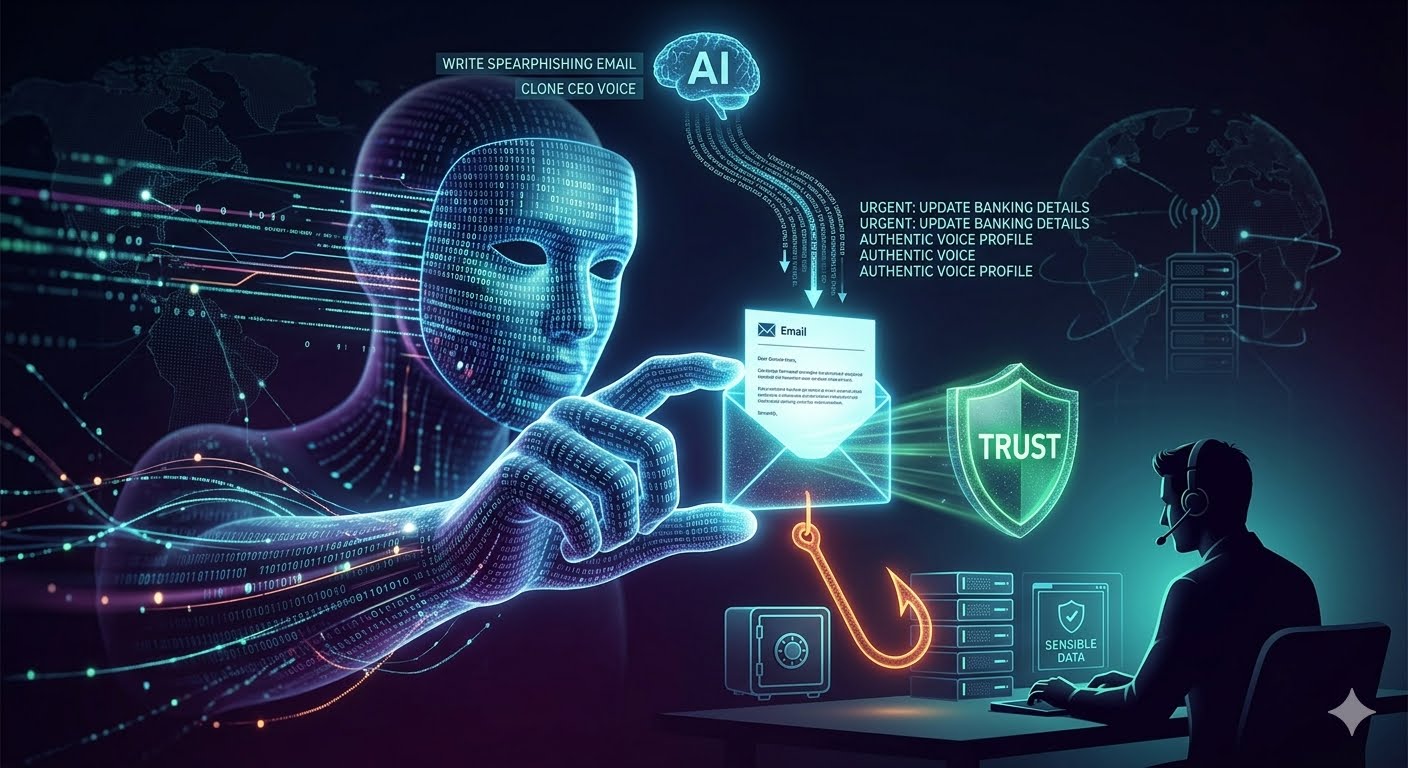

The era of "clunky" phishing, characterized by obvious spelling errors and generic greetings, is rapidly coming to an end. AI-driven phishing is the weaponization of Large Language Models (LLMs) and generative AI to create highly sophisticated, personalized, and scalable social engineering attacks that can slip past both traditional security filters and human intuition.

The Evolution: From "Spray and Pray" to "Spear and Scale"

Traditional phishing relied on a "spray and pray" methodology: sending millions of low-quality emails in the hope that a tiny percentage of recipients would be gullible enough to click. AI has flipped this script.

With tools like ChatGPT (and its illicit counterparts like WormGPT or FraudGPT), attackers can now conduct "Spear Phishing" at the scale of a mass campaign. AI can analyze vast amounts of publicly available data from LinkedIn, social media, and corporate websites to craft messages that are contextually relevant to the victim. It can mimic the specific writing style of a CEO, use industry-specific jargon, and reference recent company events, making the deception nearly indistinguishable from a legitimate internal communication.

The Mechanics of AI-Driven Attacks

AI has enhanced every stage of the phishing lifecycle:

1. Perfected Language and Localization

One of the easiest ways to spot a phish used to be poor grammar or awkward phrasing, often a result of non-native speakers using translation tools. AI removes this "red flag." It can generate perfect, professional prose in dozens of languages, allowing cybercriminals to expand their reach into foreign markets with the same level of persuasiveness as a native speaker.

2. Deepfakes: Beyond the Inbox

AI-driven phishing is no longer limited to text. Business Email Compromise (BEC) has evolved into Business Communication Compromise.

Voice Deepfakes (Vishing): Using just a few seconds of a person's voice recorded from a YouTube video or a podcast, AI can clone a voice to make a phone call to an employee, posing as a frantic executive requesting an urgent wire transfer.

Video Deepfakes: During virtual meetings (on platforms like Zoom or Teams), attackers can now use real-time deepfake filters to impersonate high-level officials. In a notable 2024 case, a finance worker in Hong Kong was tricked into paying out roughly US$25 million after attending a video call with what he thought were his "colleagues," all of whom were AI-generated deepfakes.

3. Dynamic Credential Harvesting

AI can build adaptive phishing sites. Instead of a static fake login page, an AI-driven site can change its appearance in real time based on the user's browser, location, or the specific email they clicked. Because no two visitors necessarily see the same page, the site is harder to match against the static "URL reputation" databases and page fingerprints that security software uses to block known malicious sites.

The Threat to Modern Defense Systems

AI phishing is specifically designed to defeat the two pillars of modern defense: Technical Filters and Employee Training.

Bypassing SEGs: Secure Email Gateways (SEGs) look for "signatures" of known attacks. Because AI can generate a unique, one-of-a-kind message for every single recipient, there is no "signature" to detect. The message is technically "clean"—it contains no malware, just a persuasive call to action.

Eroding Human Intuition: Most Security Awareness Training (SAT) teaches employees to look for urgency and errors. When an AI creates a calm, professional, and error-free request that perfectly matches a real business process, the "human firewall" often fails.
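The signature problem described above can be illustrated with a deliberately simplified sketch. It assumes a filter that fingerprints message bodies with an exact hash; real gateways combine many more signals (fuzzy hashing, URL and attachment reputation, heuristics), and the messages and the `blocklist` here are invented for illustration.

```python
import hashlib


def signature(message: str) -> str:
    """Exact-match fingerprint: SHA-256 of the case- and whitespace-normalized body."""
    normalized = " ".join(message.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# Hypothetical blocklist built from one previously reported phishing email.
known_bad = "Please review the attached invoice and confirm payment today."
blocklist = {signature(known_bad)}


def is_blocked(message: str) -> bool:
    """Return True if the message matches a known-bad fingerprint."""
    return signature(message) in blocklist


# A resend that differs only in case and spacing still matches the signature.
resend = "please review the attached  invoice and confirm payment today."

# A reworded variant with the same intent produces a completely different hash.
variant = "Could you look over the invoice I attached and approve the payment by end of day?"

print(is_blocked(resend))   # True
print(is_blocked(variant))  # False
```

If every recipient receives a freshly worded variant, a list of known fingerprints never has a chance to catch up, which is why the detection approaches discussed later focus on behavior rather than content matching.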

Defending Against the AI Wave

As attackers use AI to sharpen their spears, defenders must use AI to thicken their shields.

1. AI-Powered Email Security

Organizations are moving toward Integrated Cloud Email Security (ICES). These systems use machine learning to build a "baseline" of normal communication patterns. If an email arrives that is technically valid but uses a tone or request type that is statistically "unlikely" for that specific sender, the system flags it as an anomaly.
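As a rough illustration of the baseline idea, the sketch below counts how often each sender makes each kind of request and flags requests that are rare for that sender. The `SenderBaseline` class, the request-type labels, and the 5% threshold are all assumptions made for this example; commercial ICES products use far richer features and models, and their internals are not described in this article.

```python
from collections import Counter, defaultdict


class SenderBaseline:
    """Tracks how often each sender makes each (hypothetical) request type."""

    def __init__(self, request_types, threshold=0.05):
        self.request_types = list(request_types)
        self.threshold = threshold  # assumed cut-off, chosen only for illustration
        self.history = defaultdict(Counter)

    def observe(self, sender: str, request_type: str) -> None:
        """Record one legitimate message from this sender."""
        self.history[sender][request_type] += 1

    def probability(self, sender: str, request_type: str) -> float:
        """Estimate how likely this request type is for this sender.

        Add-one (Laplace) smoothing gives unseen request types a small,
        non-zero probability instead of zero.
        """
        counts = self.history[sender]
        total = sum(counts.values())
        return (counts[request_type] + 1) / (total + len(self.request_types))

    def is_anomalous(self, sender: str, request_type: str) -> bool:
        """Flag requests that are statistically unlikely for this sender."""
        return self.probability(sender, request_type) < self.threshold


baseline = SenderBaseline(["status_update", "meeting_invite", "wire_transfer"])
for _ in range(40):
    baseline.observe("ceo@example.com", "status_update")
for _ in range(10):
    baseline.observe("ceo@example.com", "meeting_invite")

print(baseline.is_anomalous("ceo@example.com", "status_update"))  # False
print(baseline.is_anomalous("ceo@example.com", "wire_transfer"))  # True
```

Note that this toy model treats a sender with no history as equally likely to send anything, so it would not flag a brand-new sender; a real system would need a separate rule for first-time contacts.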

2. Moving Toward Phishing-Resistant MFA

Traditional Multi-Factor Authentication (MFA) that uses SMS codes or push notifications is still vulnerable to "MFA Fatigue" or proxy-based phishing. The gold standard is now FIDO2/WebAuthn (passkeys), which uses public-key cryptography bound to the legitimate site's domain. The browser and authenticator will only use a credential on the site it was registered for, so a look-alike phishing site has nothing reusable to "intercept."
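The property that makes WebAuthn resistant to phishing is that the browser, not the web page, records which origin asked for the login. The sketch below simulates only that part of the protocol: the `type`, `challenge`, and `origin` fields follow the WebAuthn client data format, while the domain names and helper functions are hypothetical. Signature verification and the authenticator's own checks are omitted, so this is an illustration rather than something to deploy; a maintained WebAuthn library should handle the real ceremony.

```python
import base64
import json
import secrets

EXPECTED_ORIGIN = "https://login.example.com"  # hypothetical legitimate site


def b64url(data: bytes) -> str:
    """Base64url without padding, the encoding WebAuthn uses for the challenge."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def browser_client_data(page_origin: str, challenge: bytes) -> bytes:
    """Simulate the browser filling in the client data for a login request.

    The page cannot choose the origin value; the browser writes the origin
    the user is actually visiting.
    """
    return json.dumps({
        "type": "webauthn.get",
        "challenge": b64url(challenge),
        "origin": page_origin,
    }).encode("utf-8")


def server_accepts(client_data_json: bytes, issued_challenge: bytes) -> bool:
    """Server-side checks on the client data (signature check omitted)."""
    data = json.loads(client_data_json)
    return (
        data.get("type") == "webauthn.get"
        and data.get("challenge") == b64url(issued_challenge)
        and data.get("origin") == EXPECTED_ORIGIN
    )


challenge = secrets.token_bytes(32)  # fresh random challenge per login attempt

# Login performed on the real site.
legit = browser_client_data("https://login.example.com", challenge)

# Login relayed through a look-alike domain (note the digit "1").
phish = browser_client_data("https://login.examp1e.com", challenge)

print(server_accepts(legit, challenge))  # True
print(server_accepts(phish, challenge))  # False
```

In the full protocol the authenticator signs over a hash of this client data, so a phishing proxy cannot rewrite the origin afterward without invalidating the signature.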

3. Redefining "Trust" in Communication

Companies must implement strict out-of-band verification policies. If an "executive" makes an unusual request via voice or video call, employees must be trained to verify the request through a secondary, pre-approved channel (like a specific internal chat app) before taking action.
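A policy like this is easier to enforce when it is written down as an explicit rule instead of being left to judgment in the moment. The sketch below shows one way such a rule might be expressed; the action names, channel names, and the `may_proceed` function are hypothetical and would need to reflect an organization's real processes.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy data: which actions need verification, and which
# channels count as pre-approved for confirming them.
HIGH_RISK_ACTIONS = {"wire_transfer", "credential_reset", "vendor_bank_change"}
APPROVED_VERIFICATION_CHANNELS = {"internal_chat", "directory_callback"}


@dataclass
class Request:
    requester: str
    channel: str                        # where the request arrived, e.g. "video_call"
    action: str                         # what is being asked for
    verified_via: Optional[str] = None  # channel used to confirm it, if any


def may_proceed(req: Request) -> bool:
    """Allow high-risk actions only after confirmation on a second, approved channel."""
    if req.action not in HIGH_RISK_ACTIONS:
        return True
    return (
        req.verified_via in APPROVED_VERIFICATION_CHANNELS
        and req.verified_via != req.channel  # must differ from the original channel
    )


unverified = Request("cfo", "video_call", "wire_transfer")
verified = Request("cfo", "video_call", "wire_transfer", verified_via="internal_chat")
same_channel = Request("cfo", "internal_chat", "wire_transfer", verified_via="internal_chat")

print(may_proceed(unverified))    # False
print(may_proceed(verified))      # True
print(may_proceed(same_channel))  # False
```

Requiring the verification channel to differ from the channel the request arrived on is the key design choice: if the attacker controls the original channel, a confirmation on that same channel proves nothing.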

Conclusion: The Arms Race

AI-driven phishing has turned cybersecurity into an AI vs. AI arms race. The advantage currently sits with the attackers, who can experiment with new models without the constraints of ethics or regulations. However, by adopting "Zero Trust" principles and shifting toward cryptographic authentication methods, organizations can mitigate the impact of even the most sophisticated AI deceptions.

The goal is no longer to teach employees to "spot the phish"—it is to build systems where even if a human is tricked, the technology prevents the breach.
